A Beginning-of-Year Reflection for Enterprise Architects and Technical Leaders

As we step into 2026, it’s worth pausing to reflect on the seismic shifts that defined enterprise architecture in 2025—and the hard lessons learned when AI hype met production reality. What began as breathless excitement around generative AI and LLMs has matured into a more nuanced understanding: building AI proofs-of-concept is easy; operationalizing them at enterprise scale is where most initiatives still fail.

This article reflects on the key architectural lessons from 2025 and outlines what 2026 demands from those of us responsible for building resilient, scalable, and cost-effective enterprise systems.

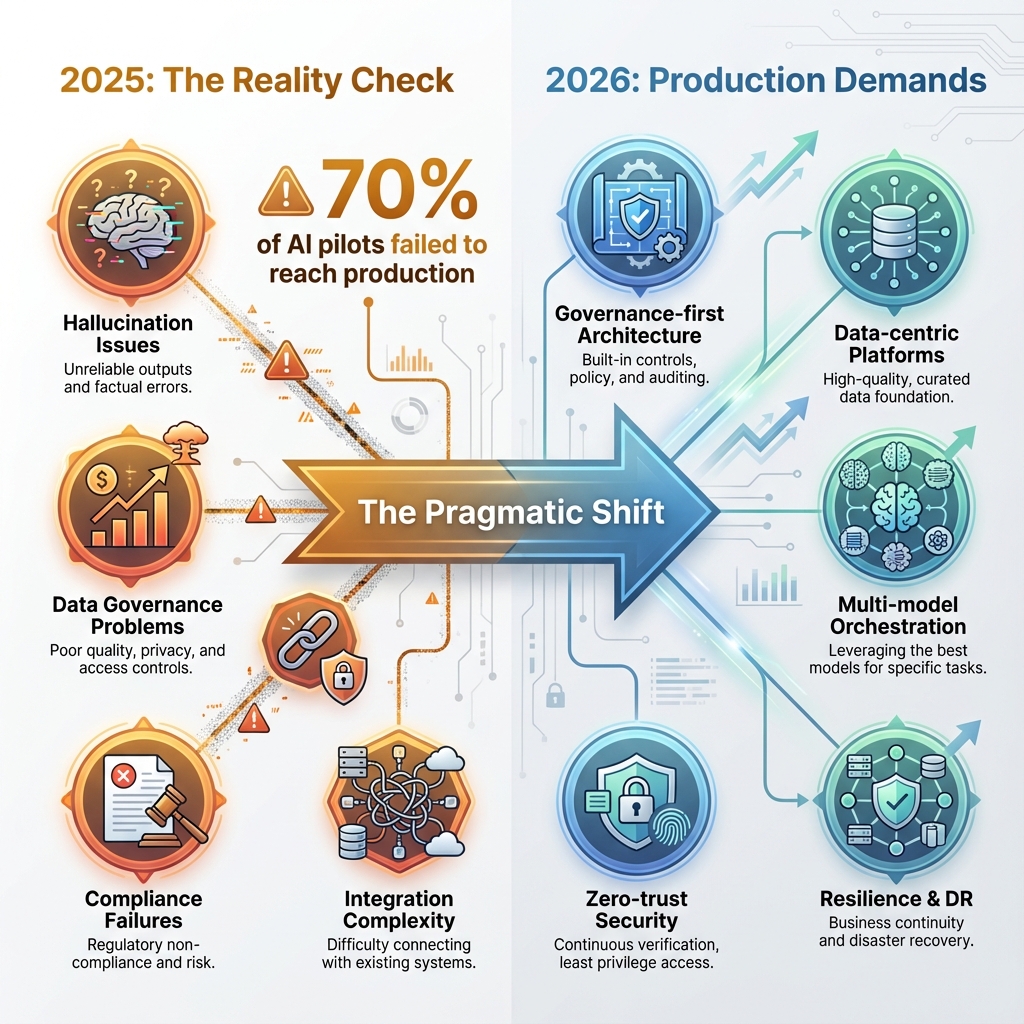

The Reality Check: Only 30% of AI Pilots Reach Production

⚠️ CRITICAL FINDING: The 70% Failure Rate

In 2025, organizations discovered an uncomfortable truth: approximately 7 out of 10 GenAI projects never made it past the pilot stage. The initial euphoria of ChatGPT-like demos quickly gave way to the brutal realities of enterprise deployment:

- Hallucination in production – LLMs confidently generating incorrect information in customer-facing systems

- Cost explosion – What seemed affordable in dev/test became prohibitive at scale

- Data governance nightmares – Legacy systems weren’t designed for the data accessibility AI demands

- Compliance failures – Healthcare (HIPAA), financial services (PCI-DSS), and EU (GDPR) requirements proving incompatible with early AI architectures

- Integration complexity – Bridging the gap between shiny new AI services and decades-old enterprise systems

The architects who succeeded in 2025 weren’t necessarily the most innovative—they were the most pragmatic. They focused on measurable ROI, robust governance, and modular architectures that could adapt as AI technology evolved at breakneck speed.

Five Critical Architecture Lessons from 2025

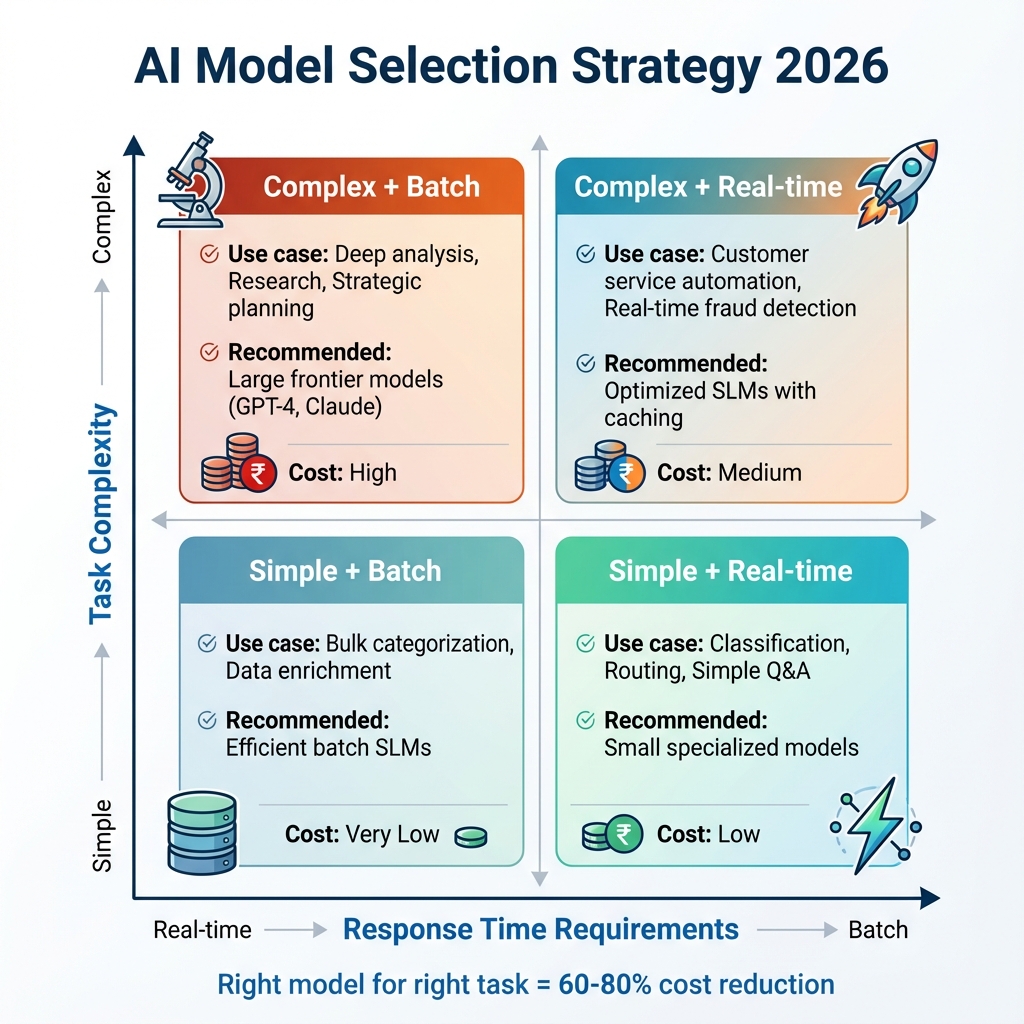

1. Bigger Models ≠ Better Solutions

The industry’s obsession with larger and larger language models hit a wall in 2025. Organizations learned that model size isn’t always correlated with business value. What matters is the right model for the right task.

We observed three key shifts:

- Specialized small language models (SLMs) fine-tuned for specific domains often outperformed general-purpose LLMs on targeted tasks

- Domain-native AI with embedded regulatory compliance and industry context delivered more reliable, trustworthy results

- Cost efficiency dramatically improved with right-sized models—some organizations reduced inference costs by 60-80% through strategic model selection

💡 ARCHITECTURE PRINCIPLE: Model Diversity Over Monopoly

Design your AI architecture to make model selection and swapping trivial. Use abstraction layers, standardized interfaces, and avoid tight coupling to specific model providers. Your platform should treat models as interchangeable components optimized for specific workloads.

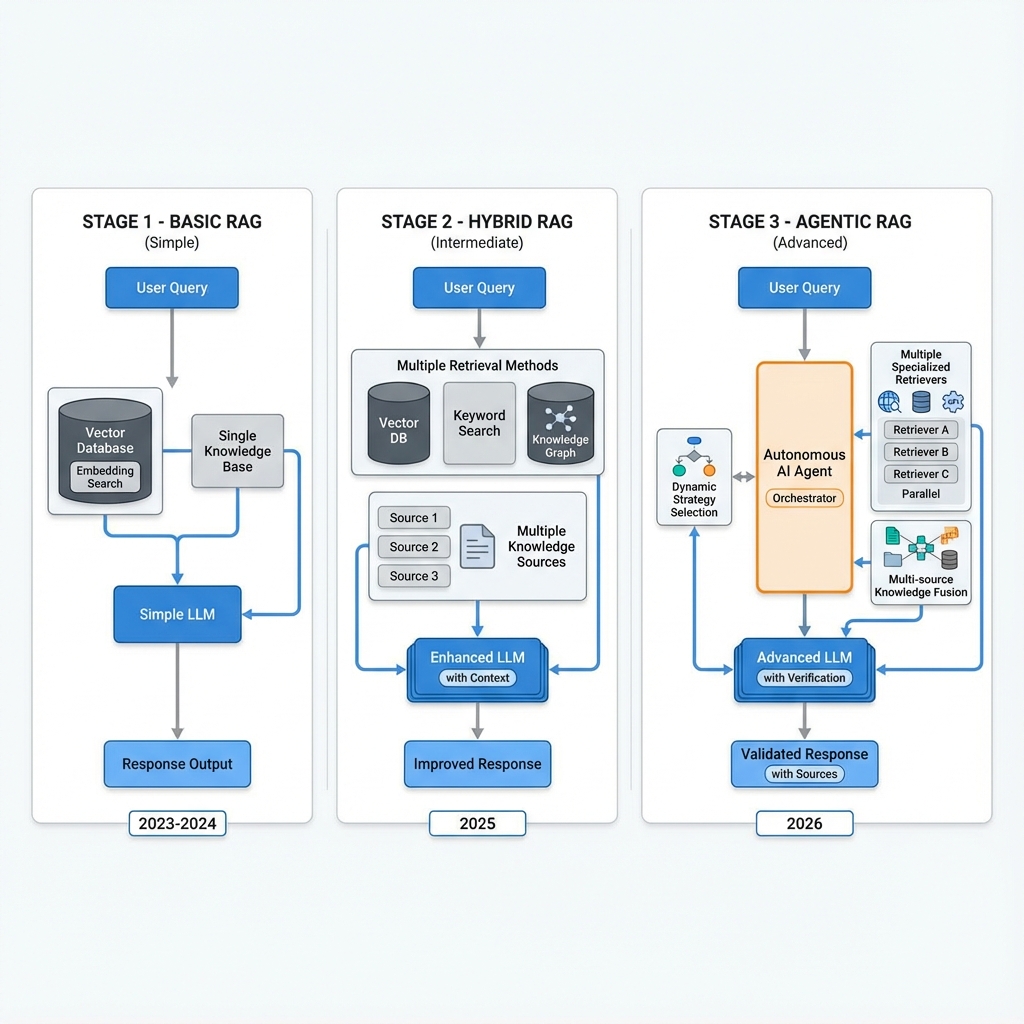

2. RAG is Table Stakes, Not Innovation

Retrieval-Augmented Generation (RAG) transitioned from cutting-edge technique to baseline expectation. By Q4 2025, any production LLM system without RAG was considered architecturally incomplete—like deploying a web application without HTTPS.

The evolution of RAG matured through three distinct phases:

- Basic RAG (2023-2024) – Simple vector search + context injection. Effective but limited by single retrieval strategy.

- Hybrid RAG (2025) – Combining vector search, keyword matching, and knowledge graph traversal. Dramatically improved accuracy for complex queries.

- Agentic RAG (2026) – Autonomous agents that dynamically orchestrate multiple retrieval strategies, validate sources, and adapt based on query characteristics and user context.

🎯 ARCHITECTURE IMPLICATION: Data Architecture First

Plan your data architecture to support evolving RAG patterns from day one. Invest in vector databases (Pinecone, Weaviate, Qdrant), semantic layers, and automated data quality pipelines. These aren’t nice-to-haves—they’re the foundation of trustworthy, hallucination-resistant AI.

Key investments: Vector indexing infrastructure, embedding generation pipelines, metadata enrichment, data versioning, and continuous quality monitoring.

3. Agentic AI: The Next Inflection Point

2025 saw agentic AI move from research labs to production. Unlike simple chatbots or single-purpose models, AI agents demonstrated the ability to:

- Plan complex, multi-step workflows autonomously without explicit instruction for each step

- Coordinate across multiple services and data sources, making intelligent decisions about which systems to query

- Operate within defined guardrails while still demonstrating creativity and problem-solving

- Learn and adapt from operational patterns and user feedback over time

However, with this power came profound new challenges. The biggest question enterprises grappled with: How do you audit an AI agent’s decision when it autonomously orchestrated 15 different microservices, made 23 API calls, and synthesized information from 7 data sources to complete a single task?

The answer: governance and observability must be built into the architecture from day one, not bolted on afterward.

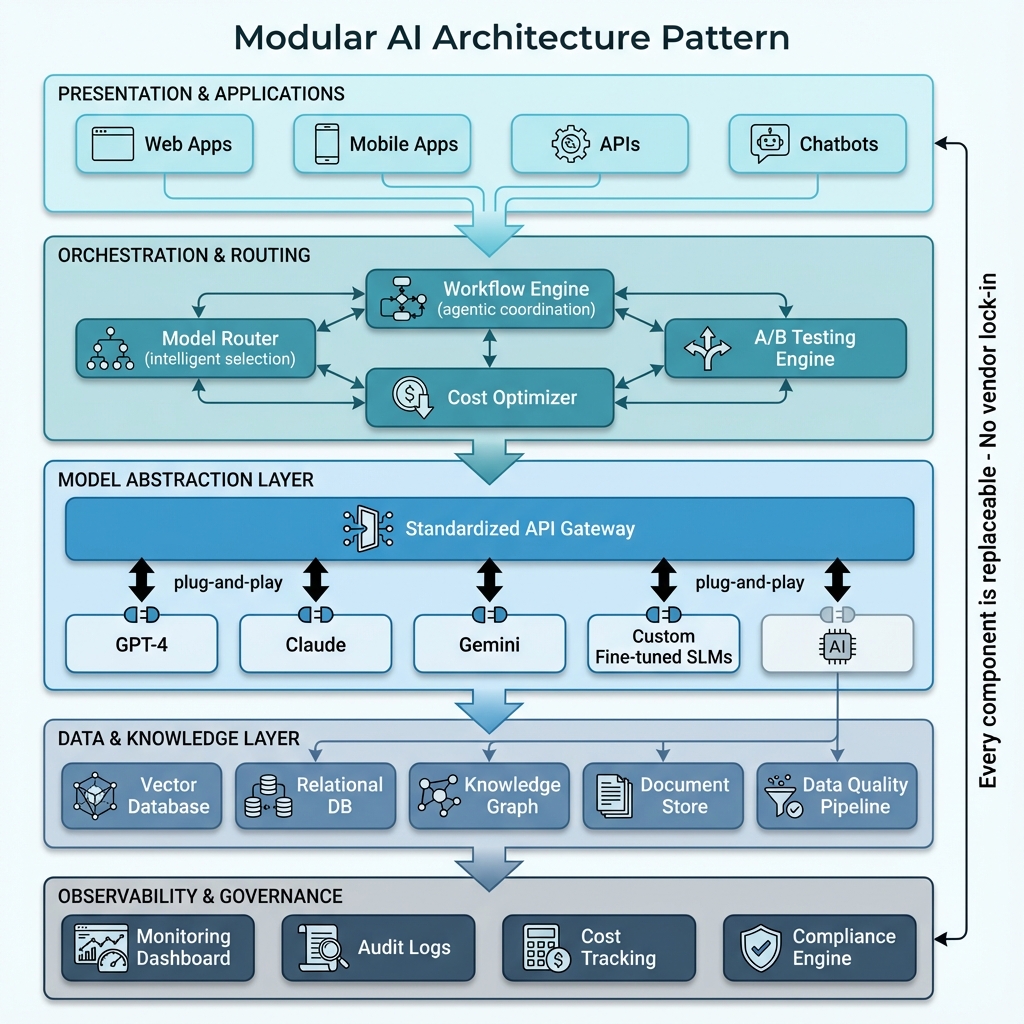

4. The Modular Architecture Imperative

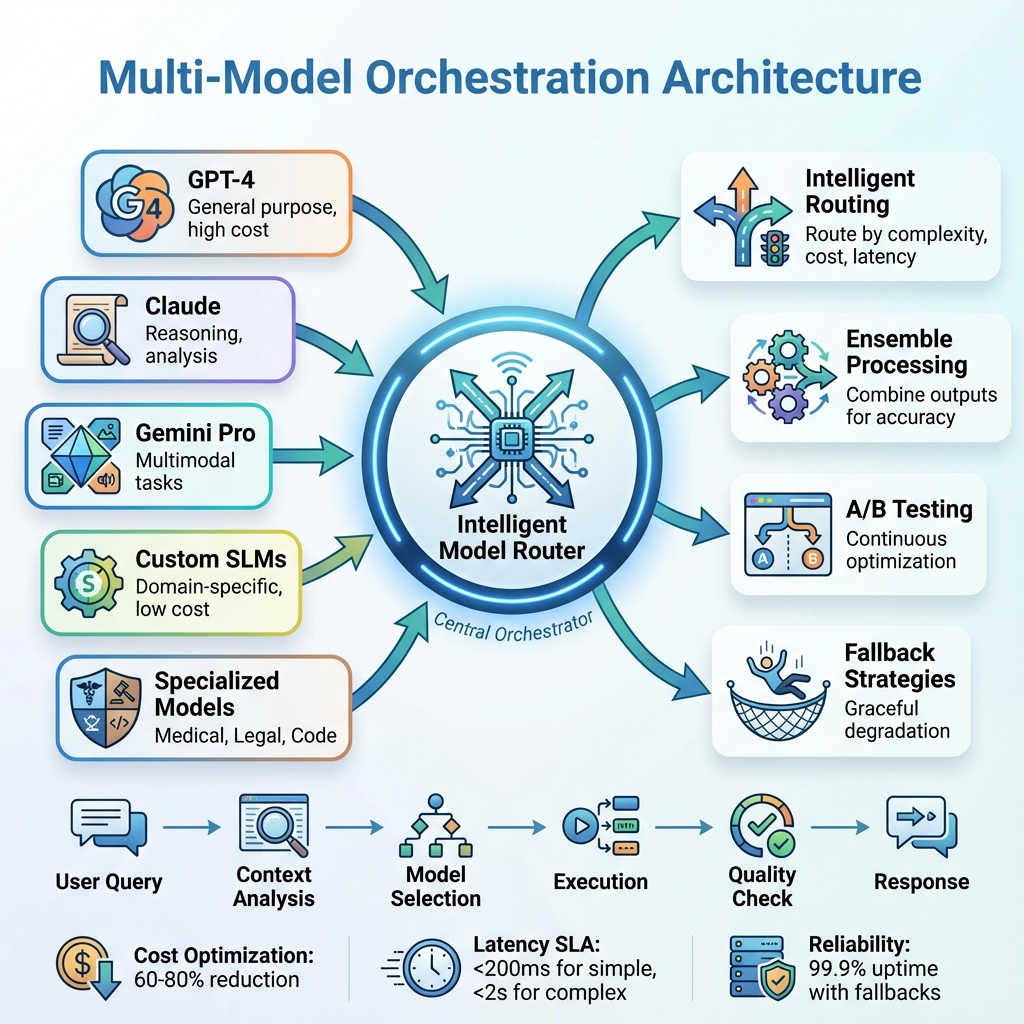

AI technology evolved so rapidly in 2025 that rigid architectures became obsolete within months. GPT-4 was state-of-the-art in January; by December, Claude 3.5, Gemini Pro, and dozens of specialized models had shifted the landscape entirely.

Organizations that thrived adopted composable, modular architectures built on these principles:

- Decoupled layers – Clean separation between data, model, orchestration, and presentation layers

- Standard interfaces – Contract-based APIs that allow component swapping without downstream changes

- Plug-and-play model endpoints – Models treated as interchangeable services behind consistent interfaces

- Vendor-neutral abstraction – No direct dependencies on provider-specific APIs

This approach enabled teams to:

- Swap OpenAI for Anthropic (or vice versa) in hours, not months

- A/B test different models for specific use cases with minimal code changes

- Avoid catastrophic vendor lock-in as providers changed pricing or capabilities

- Respond quickly to regulatory changes or compliance requirements

- Leverage specialized models (e.g., medical AI, legal AI, code generation) without architectural rework

✅ KEY PRINCIPLE: Every Component Must Be Replaceable

In a rapidly evolving AI landscape, architectural flexibility isn’t optional—it’s survival. Every component of your AI stack should be replaceable without cascading changes. Use abstraction patterns, standardized APIs, and avoid tight coupling to specific vendors or models.

Test your architecture: Could you swap your primary LLM provider in under 8 hours? If not, you have architectural debt that will cost you dearly as the AI landscape continues to evolve.

5. FinOps Became AI-Essential, Not Optional

AI infrastructure costs in 2025 shocked organizations unprepared for the economics of production AI. What cost $500/month in development exploded to $50,000/month in production. GPU compute, vector database operations, and API call volumes at scale created budget crises.

Successful teams adopted AI-aware FinOps practices:

- Real-time cost monitoring per model, per use case, per user cohort

- Automated optimization recommendations based on usage patterns

- Tiered model selection routing simple queries to cheap models, complex ones to expensive models

- Intelligent caching and prompt optimization to reduce redundant API calls by 40-60%

- Cost-per-transaction visibility for developers and product teams in real-time dashboards

Bottom line: Organizations that embedded cost observability into their AI platforms from day one avoided budget overruns and maintained sustainable AI operations.

What 2026 Demands: The Architect’s Checklist

Based on 2025’s hard-won lessons and 2026’s emerging trajectory, here’s what enterprise architects must prioritize to succeed:

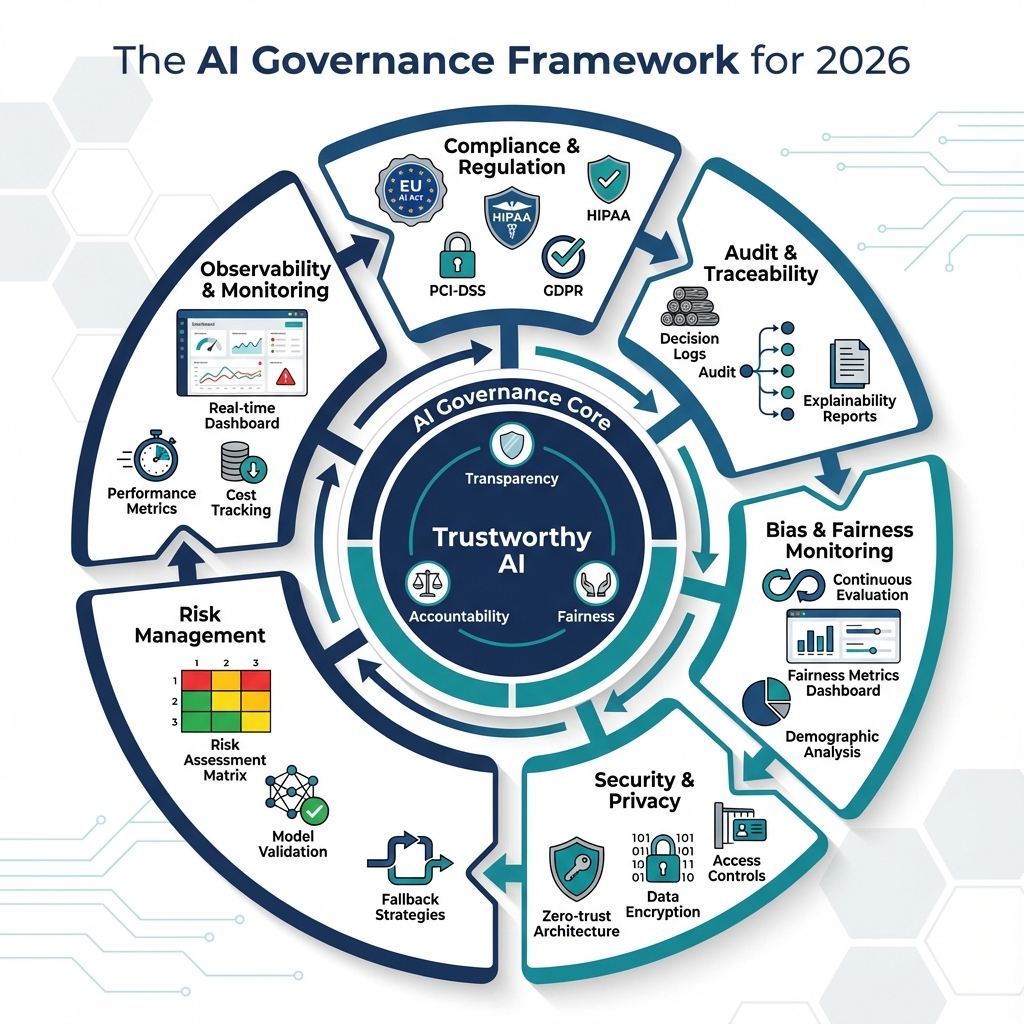

1. Governance-First Architecture

With the EU AI Act fully applicable in August 2026 and increasing board-level scrutiny of AI systems, governance is no longer a nice-to-have—it’s a regulatory requirement and competitive advantage.

Essential governance capabilities:

- Embedded compliance frameworks – HIPAA, PCI-DSS, GDPR, SOC 2 by design, not retrofit

- Real-time audit trails – Track every model decision, data access, and agentic action with full provenance

- Bias and fairness monitoring – Continuous evaluation of model outputs for discriminatory patterns across protected classes

- Explainability layers – Ability to explain why the AI made specific decisions to auditors, regulators, and end users

- Model risk management – Formal processes for model validation, approval, deployment, and retirement

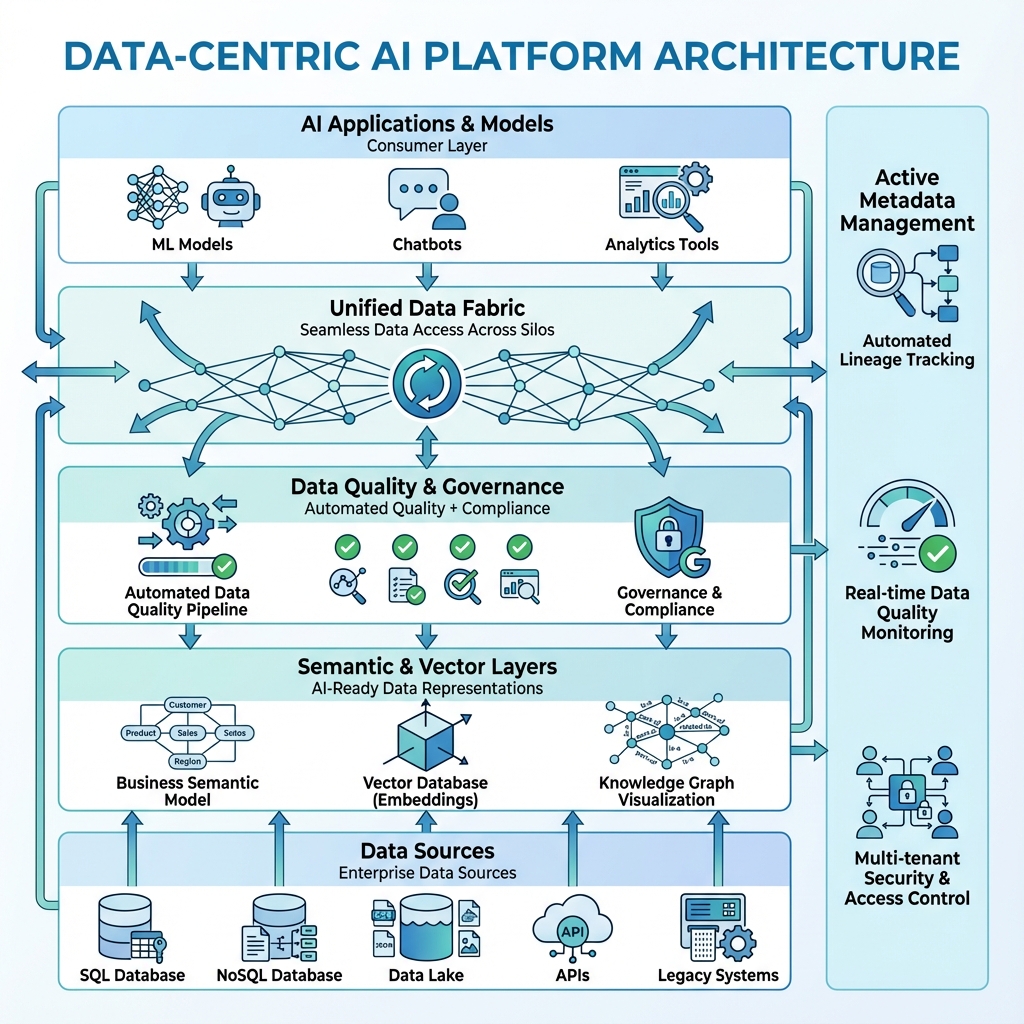

2. Data-Centric AI Platforms

The quality of your AI is fundamentally limited by your data architecture. World-class models on poor-quality data produce unreliable results.

World-class models on poor-quality data produce unreliable results. World-class models on poor-quality data produce unreliable results. Prioritize:

- Unified data fabrics – Seamless access across organizational silos while maintaining governance

- Automated data quality pipelines – Clean, contextualized, continuously validated data

- Semantic layers – Business-meaningful abstractions over technical data stores

- Vector database infrastructure – Purpose-built for embedding-based AI workloads at scale

- Active metadata management – Automated classification, lineage tracking, and impact analysis

3. Multi-Model Orchestration

No single model excels at everything. Design architectures that intelligently orchestrate multiple models:

- Intelligent model routing – Select the right model for each task based on complexity, latency requirements, cost constraints

- Ensemble approaches – Combine outputs from multiple models for higher reliability and accuracy

- Graceful degradation – Fallback strategies when primary models fail or are unavailable

- Continuous A/B testing – Infrastructure for ongoing experimentation and optimization

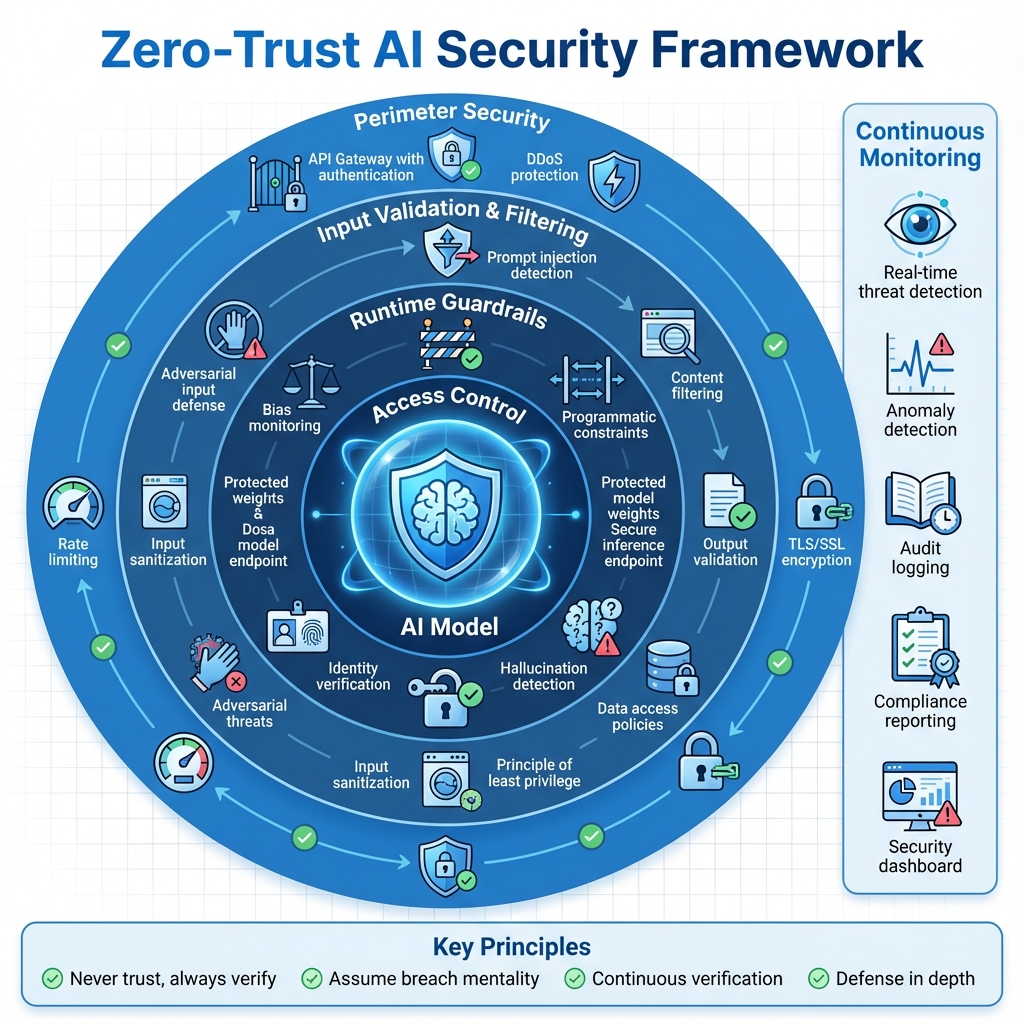

4. Zero-Trust AI Security

AI systems introduce new attack vectors. Apply zero-trust principles:

- Continuous verification – Never trust model inputs or outputs implicitly

- Runtime guardrails – Programmatic constraints on AI behavior (content filters, allowed actions)

- Prompt injection defenses – Protection against adversarial prompts attempting to manipulate model behavior

- Fine-grained access controls – Principle of least privilege for AI systems accessing enterprise data

- Secure model serving – Protect model weights and inference endpoints from extraction attacks

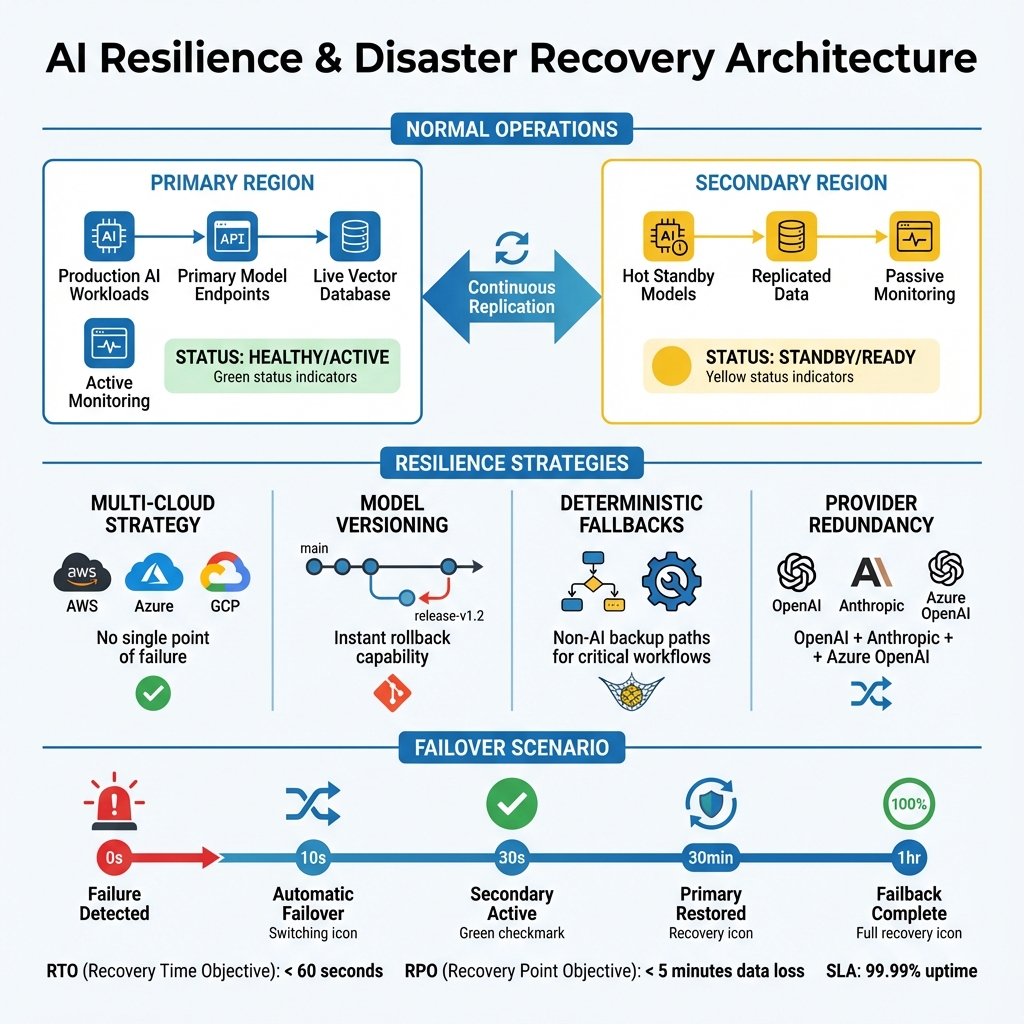

5. Resilience and Disaster Recovery for AI

AI systems need the same resilience patterns as traditional systems—with AI-specific considerations:

- Multi-cloud/multi-vendor strategies – Avoid single points of failure

- Model versioning and rollback – Ability to quickly revert problematic deployments

- Deterministic fallbacks – Critical workflows should have non-AI backup paths

- Provider outage planning – What happens when OpenAI/Anthropic/Google has downtime?

- Performance degradation monitoring – Detect and respond to model quality drift

The Human Element: What Doesn’t Change

Amid all the AI transformation, the fundamental responsibilities of enterprise architects remain unchanged:

- Pragmatism over hype – Question every “revolutionary” technology claim with healthy skepticism

- Business value first – Technology serves business outcomes, not the reverse

- Risk management – Every architectural decision is a risk/reward trade-off requiring explicit consideration

- Long-term thinking – Today’s shortcuts become tomorrow’s expensive technical debt

- People and process – The best architecture fails without organizational buy-in and operational discipline

- Continuous learning – The AI landscape evolves monthly; architects must evolve with it

📌 THE BOTTOM LINE FOR 2026

2026 will be the year AI moves from “shiny new thing” to “expected capability”. The architectures that succeed will be those that treat AI as they would any other enterprise capability: with rigorous governance, thoughtful integration, continuous monitoring, and unwavering focus on measurable business value.

We’re past the point of asking “Should we use AI?” The question now is: “How do we build AI systems that are trustworthy, cost-effective, compliant, and resilient enough to bet our business on?”

That’s the challenge—and the opportunity—for 2026. For those of us who’ve spent decades building enterprise systems, it’s familiar territory, just with new tools and higher stakes.

About the Author

Nithin Mohan T K is an Enterprise Solution Architect and Solutions Engineer with 20+ years of experience building scalable, resilient systems across healthcare, financial services, and cloud platforms. Specializing in AI/ML, Azure, AWS, Generative AI, LLMOps, AIOps, MLOps, and TOGAF-based enterprise architecture, Nithin writes about the real-world challenges of moving cutting-edge technology into production environments that businesses can rely on.

This article reflects two decades of practical architecture experience and is intended for professionals evaluating production-ready AI systems at enterprise scale. All views are based on observed industry patterns and hands-on implementation experience.

Keywords: Enterprise Architecture, AI/ML, LLMOps, Generative AI, RAG, Agentic AI, Cloud Architecture, FinOps, AI Governance, HIPAA, PCI-DSS, GDPR, Azure, AWS, Production AI, Scalability, Resilience, Cost Optimization, Vector Databases, Model Orchestration, Zero Trust AI

Discover more from C4: Container, Code, Cloud & Context

Subscribe to get the latest posts sent to your email.